Is it nuts to give cash to the poor without strings attached?

Money for nothing: the roles of evidence in GiveDirectly’s journey to $1 billion delivered

That’s not a rhetorical question; it’s the headline the New York Times ran the first time they covered GiveDirectly. My co-founders and I had a mild panic. We had been hoping, I suppose, for something benign and puffy along the lines of “New Charity Founded by Thoughtful Econ PhDs Is a Great Idea.”

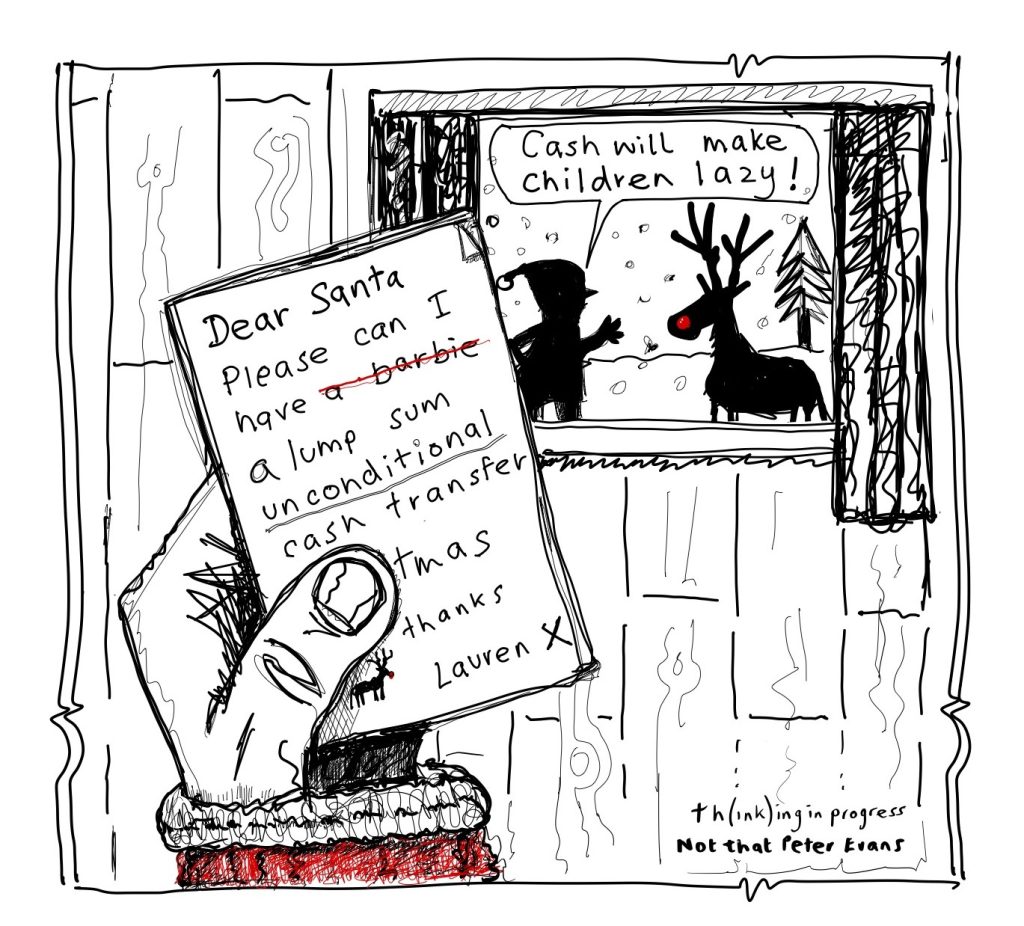

The truth is of course that that piece did what it needed to do, which was to speak to its audience where they were at. At the time (i.e., in 2011) most New York Times readers probably did think it was nuts—or, at best, naive—to give out money for nothing. And one can hardly blame them. They had been fed a steady diet of data-free, mantra-heavy messaging implying, if not stating outright, that people living in extreme poverty were not capable of sound financial choices. One must teach a man to fish, the inane aphorism goes.1

Since then, opinions—professional opinions, at least—have swung. Giving away money without strings attached is seen as a good option, often the best. A 2024 position paper by the United States Agency for International Development (USAID)—prior to its untimely demise in 2025, the largest of the bilateral donors—said that the agency “should include direct monetary transfers as a core element of its development toolkit.”2 The stated policy of the UNHCR, the UN Refugee Agency, is a “‘why-not cash approach,’ whereby operations must give [cash-based assistance] priority consideration over in-kind assistance.”

Priority consideration has not yet translated into majority market share. But numbers are up: cash transfers (and vouchers) were 20.6% of international humanitarian assistance in 2022, up 50% from five years before. And during the pandemic, when governments needed to deliver urgent help at massive scales, they turned to cash transfers en masse, reaching up to 1.4 billion people.

Private donors need more convincing. In 2023, U.S. individuals and foundations gave over $30B to international development work.3 Of that, just 0.5% went to GiveDirectly—the only sizeable nonprofit doing what we do, enabling donors to send money directly to households living in extreme poverty.4 Cash transfers’ relative share of this market, in other words, is very small. Yet it has grown enough that we have been able to raise and deliver over $1 billion to over 2 million people.

One way to tell GiveDirectly’s story is thus as a bellwether for evidence-based decision-making. To win over skeptics we invested heavily, as I will describe, in causal evidence. And we benefited from the growth around us of an ecosystem that took that evidence seriously. If even a nutty idea like giving away money for nothing could survive and thrive in this environment, this bodes well for other efforts to elevate evidence over anecdote.

But there is more to it than that. Part of the point was to provoke questions not just about how to spend development dollars, but also about who should spend them. Questions, that is, about the allocation of power and not just its optimal exercise. From this point of view it was not so obvious what role program evaluation should play. If the money really is for nothing—free not just of strings, but of any particular sought-after result—then what exactly should one evaluate?

Experimental research can, in fact, still be useful even in this regard. It can because of a key difference between experiments in the social sciences and those in the physical sciences. When Sir Ronald Fisher pioneered experimental methods at the Rothamsted Experimental Station, one of the world’s oldest centers for agricultural research, in order to figure out which fertilizers or seeds worked best, his “subjects” had no ethically significant agency: they were plants. But the subjects in a cash transfer experiment do. When a researcher documents the choices they make, we learn something about their preferences, their priorities, their vision of a good life. These insights have no analogue in a purely technical matter like agricultural productivity. And they have been an essential part of the story.

Cash transfers and causal evidence

As a point of departure I will lay out an argument an economist might make for giving money to people living in extreme poverty.

It starts with the observation that a dollar is worth much more to them than to us. To illustrate magnitudes, suppose we take a utilitarian view of things, and that we believe the relationship between utility and earnings is roughly logarithmic. This means, for instance, that doubling someone’s income—be it from $1 to $2, or from $100,000 to $200,000—always yields the same utility gain. This is a conservative stance relative to the available measurements of wellbeing, as I read them.5

We can then compare the marginal utilities of people at different initial income levels. Specifically, at the $2.15-per-day international poverty line and at, say, the $170 per day taken home by an average American full-time worker. The implied ratio of marginal utilities is 80, meaning that an incremental $1 increases wellbeing by 80 times as much at the poverty line as for the average American.6
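As a rough check on this arithmetic, here is a minimal Python sketch assuming exactly logarithmic utility, u(c) = log c, so that marginal utility is 1/c. The two income figures come from the text; the function and variable names are illustrative only.

```python
import math


def marginal_utility_ratio(income_poor: float, income_rich: float) -> float:
    """Ratio of marginal utilities under u(c) = log(c), where u'(c) = 1/c."""
    return (1.0 / income_poor) / (1.0 / income_rich)


poverty_line = 2.15  # USD per day, international poverty line (from the text)
us_worker = 170.0    # USD per day, average American full-time worker (from the text)

ratio = marginal_utility_ratio(poverty_line, us_worker)
print(f"Ratio of marginal utilities: {ratio:.1f}")

# Under log utility, doubling income adds log(2) utils at any starting level.
gain_low = math.log(2) - math.log(1)
gain_high = math.log(200_000) - math.log(100_000)
print(f"Gain from doubling $1: {gain_low:.4f}; from doubling $100,000: {gain_high:.4f}")
```

The computed ratio comes out at roughly 79, which the text rounds to 80.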

Admittedly, such ratios feel abstract. Stumping for GiveDirectly made them feel a bit less so. My co-founder and I met one afternoon with a potential donor in his opulent corporate citadel in Dubai, dining afterwards at the Burj Khalifa, where the choreography of the water fountains outside synchronizes with the muzak within. Then, on the following morning, we met with potential recipients in a dusty fishing community on the outskirts of Karachi, including one woman nearing death from tuberculosis. One can think of extreme ratios of marginal utilities as saying that the world would be better if we exchanged some synchronized water fountains for fewer TB deaths.

The second factor is that people living in extreme poverty typically face lower prices than we do. Among the 26 countries the World Bank currently classifies as low income, which collectively contain 44% of the world’s extremely poor people, the median ratio of the nominal exchange rate between local currency units and US dollars to the corresponding purchasing power conversion factor is roughly 3.1. If you don’t care to whom utils accrue, this creates an opportunity for arbitrage. You can triple the bang you get for your buck.

Multiplying these factors together we get, as an overall estimate, that transferring a dollar from a typical American to a typical person living at the extreme poverty line increases its value in terms of aggregate human well-being by a factor of 248. That’s a lot! Most of us would feel good if we could merely double our money by investing it prudently over the course of a decade. Here we have an opportunity to increase its value by a factor of 248 in a matter of weeks.7
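The multiplication can be retraced in a short Python sketch. The two factors (80 and 3.1) are the rounded figures from the text; the comparison with a "doubling every decade" investment is an illustrative extra calculation, and the variable names are hypothetical.

```python
import math

utility_ratio = 80.0  # ratio of marginal utilities, rounded as in the text
price_ratio = 3.1     # median exchange-rate-to-PPP ratio in low-income countries (from the text)

# Overall gain in value from moving a dollar to someone at the poverty line.
combined_factor = utility_ratio * price_ratio
print(f"Combined factor: {combined_factor:.0f}")

# Benchmark: an investment that doubles in value over ten years.
annual_rate_to_double = 2 ** (1 / 10) - 1
print(f"Annual return needed to double in a decade: {annual_rate_to_double:.1%}")

# Years of doubling-every-decade growth needed to reach the same factor.
years_to_match = 10 * math.log(combined_factor) / math.log(2)
print(f"Years needed to match the combined factor: {years_to_match:.0f}")
```

On these assumptions, doubling once per decade corresponds to an annual return of a little over 7%, and it would take close to eight decades of such doublings to reach the same factor.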

And yet for most people this argument is insufficient. Most worry about what people will do with the money once they get it. We expected this, in those early days at GiveDirectly. So we saw no way forward without good, hard evidence.

The question was whether we needed to produce that evidence ourselves. Governments in South and Central America were already running large conditional cash transfer programs and, in many cases, measuring their effects using randomized controlled trials (RCTs). The results as we read them were broadly “positive” in that recipients spent money on reasonable-seeming things—investment as well as consumption, for instance—and that various indicators of well-being improved. Indeed, this evidence was one of a few things that had convinced us to begin in the first place. Would yet another RCT really be any more convincing?8

We ultimately decided to run one as a matter of principle. Any non-governmental organization (NGO) asking for donations ought, we felt, to run an RCT if it could, as a sort of due diligence. Running one would be a statement of intent. It would show that we planned to do things the right way, and not market the idea on the basis of cherry-picked success stories.

It almost didn’t happen, even so. It almost died in—of all places—ethics review: Harvard’s Institutional Review Board worried that giving people money might harm them. This put us in a Catch-22: we had to argue that transfers would not have bad effects in order to justify a study to find out what effects they would have. Eventually, after months of delay, we prevailed.9

It was worth the struggle. Transfers turned out to have a variety of positive effects, from reducing malnutrition to stimulating business investment to enabling people to build more durable homes. They did not increase spending on “temptation goods” like alcohol or tobacco. The study documenting these impacts has been influential among economists (cited nearly 1,900 times). And it has been influential for GiveDirectly—helping to earn a series of top charity recommendations from GiveWell, for instance.

So we carried on. At this time, we’ve completed or initiated 24 RCTs. We’ve come to see conducting—and not just citing—experimental research as a core strategy. It differentiated us. And it let us fuse research with direct impact to create an attractive risk-return profile. Worst case, your money substantially improves the lives of some very poor people. Best case, the evidence this yields also changes other people’s minds.

Conducting research while also doing good in the world is not always an obvious combination. There is a perceived tension between what is “of interest to academics” and “of practical value.” That perception has roots going all the way back to the 1940s, and to American engineer and administrator Vannevar Bush. Bush, the great advocate for public research funding, suggested that we envision research problems on a spectrum, from “basic” to “applied.” He then argued that many important basic questions were too far from commercialization to be taken up by the private sector. His latter, essential point was entirely right. But the uni-dimensional map of the problem space he invoked in making it was too simplistic: some questions, as Donald Stokes has argued, are important both practically and conceptually.

Take the indirect, or “general equilibrium,” effects of transfers. What happens when many people in a village receive transfers? Do prices go up? Are the transfers less valuable? Potential donors often asked us about this, and reasonably so. The question mattered practically.

But academics were also interested in this question. It connects with a classic idea in development economics that one could have a demand-led “big push,” where a big enough increase in purchasing power makes it worthwhile for businesses to make investments they otherwise would not. And answering it let us produce the first experimental estimate of a “transfer multiplier,” a quantity macroeconomists often estimate to try to calculate the effect that government transfers (such as welfare payments) will have on total economic activity, or GDP.10 This is why the general equilibrium study we ended up running succeeded academically, as well as being useful for GiveDirectly. Indeed, it won one of the more prestigious awards an economics paper can.

Or take basic income. In the late 2010s, Universal Basic Income (UBI) was having a moment. Google searches for the term increased nearly eight-fold between January of 2016 and January of 2017. A swathe of GiveDirectly’s target audience were probably going to form their initial views about cash transfers writ large based on what they heard about UBI. But the pilots getting media attention at the time were small-scale and questionably designed, far from what we would consider a reasonable test.11 Running a better one ourselves seemed requisite almost as self-defense.

But it also addressed an economic question. When you give away money you can structure it as either a stream of small payments, or as a few big ones. GiveDirectly had usually done the latter, but UBI involves the former.

We’d chosen to focus on making a few big payments for three reasons. One was the descriptive evidence that accumulating lumps of capital is otherwise hard for people near the poverty line. This makes it hard to start a business or make other productive investments, because these often require a large lump-sum purchase. A large transfer enables these larger purchases. Another was that they earn higher rates of return on their investments (in small businesses, agricultural inputs, housing, and so on) than we do when we keep the money in a bank or brokerage account. This means that keeping money on our books while we wait to transfer it to them is inefficient. And a third, perhaps reflecting the first two, was that when we asked people what they preferred, they almost all wanted lump sums. Yet for all that, we had never convincingly compared the impacts of the two. When we did, the results surfaced a lot of interesting economics, including the fact that UBI recipients often formed savings clubs to “reverse-engineer” their streams of small payments back into lumpier ones.

In short, setting out to solve what Bush might have called applied problems has often led to more basic scientific insights. As a result GiveDirectly studies have been published in many of the top economics journals, including (if the names mean anything to you) the American Economic Review, Econometrica, Review of Economic Studies, and Quarterly Journal of Economics, even though in no case was publication in a top journal the goal.

And our research-led strategy seems to have worked. In 2025 GiveDirectly delivered its billionth dollar. Raising that money has consistently cost $0.05 or less per dollar raised, a low figure by industry standards. Some donations have come from people whose first reaction was “at last!” But many have come from people whose first reaction was “this sounds nuts.”

Of course, we had help. GiveWell, the first outfit to publicly and systematically assess charities on the basis of causal evidence of their programs’ impacts, launched in 2007. In 2012, they endorsed GiveDirectly as a top charity. In 2010, USAID launched Development Innovation Ventures, a program to find high-impact development interventions. It would eventually support GiveDirectly’s benchmarking collaboration with USAID. There have been new evidence-based funders: Good Ventures, which would account for a large share of GiveDirectly’s early funding, launched in 2011, and The Life You Can Save, which would consistently promote GiveDirectly, launched in 2013. Google.org took an increasingly data-centric approach, backing some of GiveDirectly’s boldest bets. All of this occurred during an ambient rise in the appetite for experimental evidence and the randomista12 turn in development economics, for their roles in which Abhijit Banerjee, Esther Duflo, and Michael Kremer were recognized with the 2019 Nobel Prize. Today, the ecosystem looks far friendlier to evidence-based strategies than it did when we started.

We also benefited from the sheer volume of cash transfer RCTs. We could point to a much larger and more robust evidence base than we could have produced on our own. I think this helped us escape a “winner’s curse.” When there have been only a few studies of a new idea, it will often look either better or worse than it really is. Momentum and hype build behind the good-looking ones. But this means that as more studies come out there is likely to be some mean reversion, where subsequent studies estimate effect sizes smaller than previously expected, and some disappointment. Microcredit arguably suffered from this boom-bust dynamic.13 Cash transfers were comparatively fortunate; the evidence base grew fast enough that the hype never got as far out in front.
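The winner’s curse logic can be illustrated with a small simulation. This is a sketch under invented assumptions, not an analysis of any real evidence base: every hypothetical idea has the same true effect, each study is a noisy draw around it, and the parameter values are made up for illustration.

```python
import random
import statistics


def simulate_winners_curse(true_effect=0.1, noise_sd=0.2, n_ideas=1000,
                           n_early=3, n_later=30, top_share=0.1, seed=0):
    """Compare early and later estimates for the ideas that looked best early on.

    All parameters are hypothetical. Every idea shares the same true effect,
    so any gap between early and later estimates is pure selection on noise.
    """
    rng = random.Random(seed)
    ideas = []
    for _ in range(n_ideas):
        # Average of a handful of early studies, then of many later ones.
        early = statistics.mean(rng.gauss(true_effect, noise_sd) for _ in range(n_early))
        later = statistics.mean(rng.gauss(true_effect, noise_sd) for _ in range(n_later))
        ideas.append((early, later))

    # "Hype" builds behind the ideas with the best-looking early evidence.
    ideas.sort(key=lambda pair: pair[0], reverse=True)
    hyped = ideas[: int(n_ideas * top_share)]

    early_mean = statistics.mean(e for e, _ in hyped)
    later_mean = statistics.mean(l for _, l in hyped)
    return early_mean, later_mean


early_mean, later_mean = simulate_winners_curse()
print(f"Hyped ideas, early estimate: {early_mean:.2f}")
print(f"Hyped ideas, later estimate: {later_mean:.2f}")
```

In this setup the ideas selected for their strong early results look much better than the true effect at first, and their later estimates fall back toward it. Raising `n_early`, which stands in for an evidence base that grows quickly, shrinks the gap.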

From evidence to empowerment tool

Causal research can certainly help those who already have power, such as the power to choose what to fund, exercise it more effectively. But can it also influence the allocation of power? Can it meaningfully empower the people it studies?

Historically, development work has seen fairly little “empowerment” in the sense I mean here, i.e. real transfers of decision-making rights.14 There has been a bit of budget support to national governments, true, and some funding to local bodies à la Community-Driven Development.15 But individual people living in extreme poverty have certainly had little direct say. Money was spent on their behalf, but not at their behest.16

You see this power dynamic reflected in the research. It is so ordinary that it goes unseen: research that hopes to inform important decisions is addressed to the people with the power to make them, i.e. funders and policy-makers. A program evaluation paper might open, for instance, by taking as motivation the fact that policy-makers want to make some outcome go up.

Gunnar Myrdal once observed something analogous about why economists began studying development—as opposed to the wealth of wealthy nations—in the first place:

“The direction of our scientific exertions, particularly in economics, is conditioned by the society in which we live, and most directly by the political climate… Rarely, if ever, has the development of economics by its own force blazed the way to new perspectives. The cue to the continual reorientation of our work has normally come from the sphere of politics; responding to that cue, students turn to research on issues that have attained political importance.”

The same goes for valuation. One cannot evaluate without valuation; there is no way to determine how good an intervention is without taking a stand on how to measure the good. These days the usual way is by asking whether an intervention can inexpensively increase an outcome that policy-makers want—i.e., cost-effectiveness analysis. Economic welfare analysis, in contrast, requires that you ask how the intervention affects various people’s wellbeing as they themselves see it. This is harder to do, and perhaps consequently, you see less of it.

A concrete example may sharpen this distinction. Consider sending SMS messages to families encouraging them to feed their children nutritious meals, and take them for regular check-ups. If this “works,” it will induce both benefits and costs. If families spend more money on food for children, they must spend less on something else. If they visit a health clinic more often, some of that clinic’s capacity cannot be used for something else. Welfare analysis pushes us to consider how to value such things, whereas a typical cost-effectiveness analysis might simply observe that child health improved a lot relative to the negligible cost of sending SMS messages.17 This is not the whole story—but it is what matters from the narrow point of view of a technocrat tasked with improving child health.

Our ecosystem is prone to such narrowness by its very design. Agencies and foundations have their distinct divisions tasked with promoting health, education, livelihoods, and so on. These are good goals per se, of course, and creating specialized organizations to pursue them makes some sense. But it also tends to result in a lot of powerful people asking relatively narrow questions about cost-effectiveness. They focus on the impacts on health or education or livelihoods—not all of them at once.

Whereas cash transfers focus on nothing in particular; they can be used for anything. This is why studies of cash transfers are particularly good at surfacing the tensions. For example, my co-authors and I recently studied a transfer scheme in the Indian state of Jharkhand whose stated aim was to reduce child malnutrition. We found that it did, to an extent. But (unsurprisingly) households also spent much of the money on things other than food for children, including food for adults. The program doesn’t look particularly cost-effective if you divide the effects on child anthropometrics by total costs. But this amounts to treating the other things as having absolutely no social value, which cannot be right.

How then can a cash research program engage with power, as it is currently structured? In (at least) two distinct ways: it can be pragmatic, or prophetic.

The pragmatic approach is simply to answer funders’ questions, such as they are. At GiveDirectly, for instance, we worked with one foundation whose funding came from a large coffee conglomerate and whose remit was therefore to help coffee farmers. For them the key question was what impacts transfers would have in coffee-growing regions, and on coffee production. We also worked with a foundation whose mandate was to serve women and girls; for them the key question was how transfers to young women making critical decisions about education, employment, fertility and marriage would affect those choices. In another instance, we worked with USAID to “benchmark” the impacts of their conventional programming, asking how giving away the same amount of money to the same kinds of people but with no strings attached would affect the same outcomes Congress had tasked it with shifting—outcomes like youth employment, for example.18

By taking these narrow objectives as given, these studies stacked the deck against cash transfers. We knew that recipients would almost surely spend some of the money on things that did not advance those objectives, and hence count for nothing in a cost-effectiveness analysis—things like food for adults in Jharkhand. Even so, transfers often ended up looking cost-effective.19 In such cases you could end up with de facto empowerment—funders choosing to transfer money without strings attached—without changing their underlying premise.

In the prophetic approach, research must be a bit provocative. Instead of asking how to achieve a given kind of success, it can offer to shed light on recipients’ notions of success.

Consider housing. Housing is a key asset for low-income households—shelter, after all, generally follows food on lists of existential needs. Development economists often omit housing from measures of well-being, as it is vexingly hard to value.20 But it surely matters. In relatively high-quality data from Indonesia, Mexico, and South Africa, for instance, my co-authors and I estimated that housing services represented between 22% and 43% of poor households’ consumption.

So it is no surprise that many GiveDirectly recipients have invested heavily in housing. They build new homes, or expand and upgrade existing ones. One popular choice is to replace a roof of thatch with one of sheet metal.21 This was so common, in fact, that it caught eyes at Habitat for Humanity, the leading house-building NGO. Upon meeting Habitat’s head, I was surprised that he thanked me for drawing so much attention to housing!

I have no idea how this affected Habitat’s bottom line quantitatively. But the role research played here is striking. The usual technocratic logic would be

Donors want more housing (and believe it is more important than other things)

& Causal evidence shows that recipients use cash transfers to buy it

⇒ Donors fund more cash transfers.

while here it is

Recipients want more housing (and believe it is more important than other things)

& Causal evidence reveals this fact to donors

⇒ Donors fund more housing.

Evidence plays a role, but not to reveal how best to achieve donors’ priorities. Instead, it reveals what the recipients see as a priority.

This is why, in GiveDirectly studies, we typically pushed to measure a large set of outcomes. Larger, in particular, than the set of outcomes on the funder’s initial wish list. Measuring something—like investment in housing, say—makes visible the extent to which recipients are prioritizing it. The most important outcomes to measure, paradoxically, can be those that are not our priorities, but that could be theirs.

We can also extend this logic to choices not just about how to spend money, but also about how to receive it. I mentioned earlier one such study in which my co-authors and I learned that most people wanted lump sums, and not streams of small transfers. We also learned that timing mattered. A sizable minority preferred to defer their transfers for at least a month or two. They had various reasons, some of which we had not anticipated: to have more time to plan, to get money in the appropriate season for home-building, or at a time they would be free to start a new project, or at a time their neighbors would have money to spend at a new business, and so on. We learned a lot, in short, about the issues they were dealing with, much more than we would have if we had tested which timing had a bigger effect on some ad hoc outcome index.

Normative choices in positive economics

Economists speak of maintaining a “positive / normative distinction” in research. Our vocation, in this view, is to describe “what is” (the positive), while others can then decide “what should be” (the normative). This idea has a stellar pedigree running back through Keynes (“the function of political economy is to investigate facts and discover truths about them, not to prescribe rules of life… It is described as standing neutral between competing social schemes”), Robbins (“economics is entirely neutral between ends”), and Friedman (“positive economics is in principle independent of any particular ethical position or normative judgments”), among other luminaries.

And to me, when I encountered it in graduate school, it seemed simplifying. It absolved me of any ethical responsibilities beyond honesty. Just the facts, ma’am.

The wrinkle is of course that one must decide which facts. This is obvious in the extremes. If I were to study how to breed infectious agents that could be used as biological weapons, I could hardly disclaim responsibility for the potential consequences on the grounds that the research is merely positive.22 Nor can one evade responsibility by appealing to what policy-makers want. Some of them have wanted biological weapons.

The truth is that economists make ethically meaningful choices all the time. In one recent study, for instance, my co-authors and I estimated effects of introducing biometric authentication into India’s largest social protection scheme. We found that corruption fell. We also found that between 1.5 million and 2 million legitimate beneficiaries lost access to their benefits at some point. Documenting either one of these results on its own would have been perfectly valid “positive” research. But it would have been ethically problematic, serving either the interests of the government or of its critics. And our own critics on the left might say that we made a misstep in studying this particular reform in the first place when we could instead have studied reductions in fraud achieved through other, less fraught means.

Or take the work on general equilibrium effects of transfers I mentioned earlier. In that paper we first estimate the economic multiplier on transfers, and then separately consider how it changed the welfare of recipients. This matters since, as Greg Mankiw and Matthew Weinzierl have pointed out, GDP and welfare are not the same thing. If people are induced to work more, for instance, this unambiguously raises GDP, but does so at the cost of leisure. Welfare thus rises less or perhaps not at all. From this point of view, it was normatively important to document that (in this case) GDP rose not primarily because people worked longer hours, but because they earned more per hour. All the more so because so much of the broader dialogue about cash transfers and labor supply has taken exactly the opposite ethical stance: that it would be bad if “lazy” recipients were to work less.23

This has been my broader point about the role of evidence: it matters what questions we ask. It mattered at GiveDirectly. It was pragmatically important that we address the understandable concerns holding many potential donors back—concerns that people living in poverty didn’t share their priorities, or didn’t know how to fish (or, at least, where to get fishing lessons). But it was also important to show that people living in poverty don’t always share their priorities, and that sometimes we were the ones being naïve about where and how to catch fish.

Paul Niehaus is Chancellor’s Associates Endowed Chair in Economics at the University of California, San Diego, and co-founder of GiveDirectly, Segovia, and Taptap Send.

If you have comments on this article, or wish to contribute to the discussion, please email them to letters@indevelopmentmag.com. Responses will be featured in a letters section.

- The provenance of this phrase is murky, but Victorian novelist Anne Thackeray Ritchie is often credited. The irony is that when the inveterate skeptic Max Du Parc introduces it, in her novel Mrs. Dymond, he does so to critique the upper classes:

“I don’t suppose even Caron could tell you the difference between material and spiritual,” said Max, shrugging his shoulders. “He certainly doesn’t practise his precepts, but I suppose the Patron meant that if you give a man a fish he is hungry again in an hour. If you teach him to catch a fish you do him a good turn. But these very elementary principles are apt to clash with the leisure of the cultivated classes. Will Mr. Bagginal now produce his ticket — the result of favour and the unjust subdivision of spiritual enjoyments?” said Du Parc, with a smile. (Source) ↩︎

- Predictably, as of February 2025, this page no longer exists. A Wayback Machine copy exists here. ↩︎

- Specifically, they gave $30B to charities classified as primarily working on international affairs. This is thought to be a lower bound on total giving to international development because a meaningful but unreported share of donations to religious organizations—which attracted $146B in 2023—eventually go to overseas work. ↩︎

- Many other NGOs run excellent unconditional cash transfer programs, but none promise that this is the sole thing they will do with your money. ↩︎

- These estimates may themselves be unfairly conservative to the extent that the subjective happiness of the rich and the poor reflects adaptation to their circumstances, as for example Sen (1988) has pointed out. ↩︎

- Under logarithmic utility, u(c) = log c, the marginal utility of income is 1/c; thus, the ratio of the marginal utility at income level c1 to that at income level c2 is c2/c1. ↩︎

- There is arguably also a third, macroeconomic factor to account for. My co-authors and I have estimated, using a large-scale field experiment, that economies in rural Kenya grew by $2.50 for every $1 transferred into them. Multiplier estimates for the US tend to be lower, around $1.60. One might therefore reasonably factor in a “relative multiplier” adjustment of 2.5 / 1.6 ≈ 1.6 or more. ↩︎

- The other two factors were (a) the advent of reliable, low-cost digital payments solutions like mobile money in low-income countries, and (b) our conversations with existing NGOs, which persuaded us they were unlikely to offer a direct transfer service as this would cannibalize existing business models. ↩︎

- We also had to find a location. Our initial idea had been to conduct the study near Busia, which had become a hotbed for RCTs after the pioneering early collaboration there between Michael Kremer (among others) and the NGO Investing in Children and their Societies (ICS). But Busia turned out to be too hot of a bed: so many other RCTs were running nearby that we could not find a place to work without stepping on someone’s toes, inadvertently cross-cutting their randomization or contaminating their control group. So we packed our bags and went elsewhere. ↩︎

- Answering it also required an unusually big experiment and novel analytical methods. See Muralidharan & Niehaus (2017) on the case for large-scale experimentation and Faridani & Niehaus (2024) on their use for estimating causal effects. ↩︎

- Subsequently several better-run trials in high-income countries have released results, including an exceptionally detailed one coordinated by Open Research (Bartik et al., 2024; Miller et al., 2024; Vivalt et al., 2024). ↩︎

- Economists and researchers who advocate randomized controlled trials as the gold standard for evaluating poverty reduction. ↩︎

- In 2005 my co-founders and I finagled invitations to a kickoff event for the International Year of Microcredit at the United Nations. The mixed drinks were, as I recall, stronger than the evidence. ↩︎

- The word “empowerment” has been cheapened somewhat by over-use (see for example Jayakarani et al., 2012); here I will use it to refer narrowly to transfers of decision-making rights. One person is empowered only when another is disempowered or, more to the point, chooses to disempower themselves. ↩︎

- Casey (2018) is an excellent review of such programs. ↩︎

- A review of “participatory grantmaking” commissioned by the Ford Foundation found something similar: many examples in which beneficiaries were consulted, but few in which these consultations really bound the consultants in any way. ↩︎

- The more thoughtful cost-effectiveness analyses would, in fairness, try to account for the cost of health system capacity. But this is different from their value in alternative uses, which is what economics was built to study. ↩︎

- While straight-forward enough in concept, this took some vigorous machete-wielding by very brave and dedicated civil servants to pull off in practice. To give you some idea, they needed a memo from the General Counsel’s office providing legal cover; this ended up specifying that GiveDirectly would call each recipient to confirm that no one had spent taxpayer dollars on bad things—including birth control. ↩︎

- See, for example, the results from benchmarking studies in Rwanda (McIntosh & Zeitlin, 2022; 2024) and the Democratic Republic of the Congo (Javier et al., 2022). ↩︎

- See Amendola & Vecchi (2022). ↩︎

- See Haushofer & Shapiro (2016), Table VI. ↩︎

- This is more mechanical than the critique made by Blaug (1992) and Putnam (2002), among others, that a sharp dichotomy between “fact” and “value” may not exist in the first place. Even if you believe that purely factual statements are possible, it matters which ones you make. ↩︎

- See, for instance, Banerjee et al. (2017). ↩︎